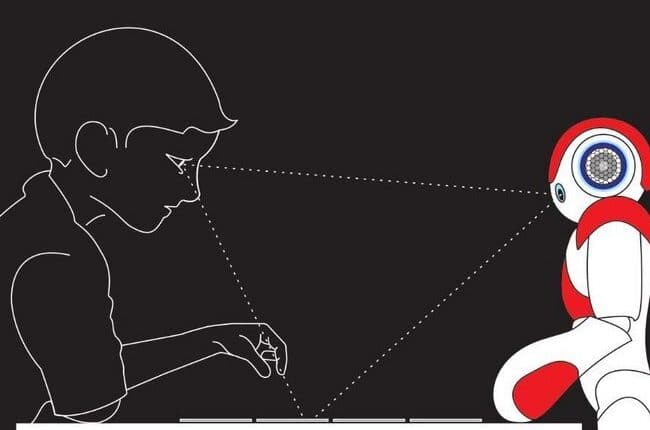

Using gazes for effective tutoring with social robots

PhD candidate Eunice Njeri Mwangi of the Department of Industrial Design has investigated how intuitive gaze-based interactions between robots and humans can make robot tutors more effective. She successfully defended her PhD thesis on Wednesday, 28 October 2020.

Advances in robotic technology have made it possible to integrate robots into settings where they interact collaboratively with human users. Robots are gaining prominence in social spaces such as education, therapeutic facilities for children, and nursing homes for the elderly. One promising use of social robots is tutoring, where robots act as tutors or trainers, for example in rehabilitation centers for children with special needs.

Intuitive interaction between robots and users is crucial to improving outcomes in tutoring and other persuasion tasks. Although there has been considerable progress towards natural interaction between robots and humans, designing robot behavior that effectively supports human-robot tutoring remains an open problem.

In her research, Eunice Njeri Mwangi, a member of the Future Everyday research group at TU/e, focused on gaze-based interactions between humans and robots. A better understanding of these interactions can inform the design of more effective gaze behavior, which in turn can foster performance, social engagement, and mutual coordination in a tutoring setting.

Gaze behavior

Mwangi conducted several user studies to investigate mutual coordination, gaze-following, and complex sequences of gaze behavior in robot tutoring interactions. In these studies, which included both laboratory and field experiments, human participants (adults or children) interacted with a tutor (human or robot) in a board game tutoring activity.

Using observational analysis and objective measurement techniques, including eye-tracking and lag-based methods, she examined interactive gaze behavior in realistic tutoring settings.

From these studies, Mwangi concludes that effective robot gaze behavior, including explicit, frequent, and dynamic gaze cues, can help build shared understanding and mutual awareness between humans and robots, leading to positive outcomes in a tutoring interaction.

Guidelines

Overall, the findings from the user studies contribute new design guidelines for gaze-based communication that can improve learning performance and promote positive human-robot tutoring interactions.
