Sentinel loads up with $1.35M in the deepfake detection arms race

Estonia-based Sentinel, which is developing a detection platform for identifying synthesized media (aka deepfakes), has closed a $1.35 million seed round from a group of seasoned angel investors (Jaan Tallinn of Skype, Taavet Hinrikus of TransferWise, and Ragnar Sass & Martin Henk of Pipedrive) and Baltic early-stage VC firm United Angels VC.

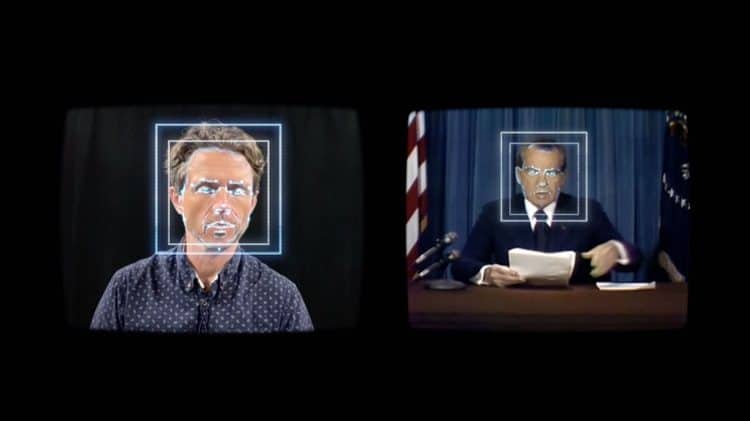

The challenge of building tools to detect deepfakes has been likened to an arms race, most recently by tech giant Microsoft, which earlier this month launched a detector tool in the hope of helping to pick up disinformation aimed at November’s U.S. election. “The fact that [deepfakes are] generated by AI that can continue to learn makes it inevitable that they will beat conventional detection technology,” it warned, before suggesting there’s still short-term value in trying to debunk malicious fakes with “advanced detection technologies.”

Sentinel co-founder and CEO Johannes Tammekänd agrees on the arms race point, which is why the startup’s approach to this “goal-post-shifting” problem entails offering multiple layers of defence, following a cybersecurity-style template. By contrast, he says, rivals such as Microsoft’s detector and Deeptrace (aka Sensity) rely on just “one fancy neural network that tries to detect defects.”

“Our approach is we think it’s impossible to detect all deepfakes with only one detection method,” he tells TechCrunch. “We have multiple layers of defence that if one layer gets breached then there’s a high probability that the adversary will get detected in the next layer.”
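To see why stacking layers helps, here is a back-of-the-envelope calculation. It assumes, purely for illustration, that each of the $n$ layers misses a given fake independently with probability $m_i$; the 30% miss rate below is a made-up figure, not one Sentinel has published.

$$
P(\text{all layers miss}) = \prod_{i=1}^{n} m_i, \qquad m_i = 0.3,\ n = 4 \;\Rightarrow\; 0.3^4 = 0.0081
$$

Under that assumption roughly 0.8% of fakes would slip past all four layers, versus 30% for any single layer. In practice the layers are unlikely to be fully independent (a fake engineered to beat one check may be engineered to beat the others), so the real gain would be smaller.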

Tammekänd says Sentinel’s platform currently offers four layers of deepfake defence: an initial layer that hashes known in-the-wild deepfakes so new media can be checked against them (which he says is scalable to “social media platform” level); a second layer consisting of a machine learning model that parses metadata for signs of manipulation; a third that checks for audio changes, such as synthesized voices; and, lastly, technology that analyzes faces “frame by frame” to look for signs of visual manipulation.
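The article does not say which hashing scheme Sentinel’s first layer uses. As a minimal sketch, assuming a perceptual “average hash” computed over a video frame that has already been downscaled to 8×8 grayscale (a common textbook technique swapped in here for illustration), a lookup against a catalogue of known deepfakes might look like this. The function names and the `max_distance` threshold are hypothetical.

```python
from typing import Iterable, List

# Assumption: each frame has already been downscaled to 8x8 grayscale (values 0-255).
Frame = List[List[int]]


def average_hash(frame: Frame) -> int:
    """Return a 64-bit perceptual hash: a bit is 1 where a pixel is brighter than the frame mean."""
    pixels = [p for row in frame for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        # Shift left and append this pixel's bit; the first pixel ends up as the most significant bit.
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Count the bits that differ between two hashes."""
    return bin(a ^ b).count("1")


def matches_known_deepfake(frame: Frame, known_hashes: Iterable[int], max_distance: int = 5) -> bool:
    """Hypothetical first-layer check: is this frame a near-duplicate of a catalogued deepfake?"""
    h = average_hash(frame)
    # Linear scan for clarity; a platform-scale system would need an index instead.
    return any(hamming(h, known) <= max_distance for known in known_hashes)


if __name__ == "__main__":
    catalogued = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
    known = {average_hash(catalogued)}
    reencoded = [[min(p + 2, 255) for p in row] for row in catalogued]  # slight brightness shift
    inverted = [[255 - p for p in row] for row in catalogued]
    print(matches_known_deepfake(reencoded, known))  # True
    print(matches_known_deepfake(inverted, known))   # False
```

A perceptual hash is used here rather than a cryptographic one (such as SHA-256) because re-uploaded deepfakes are usually re-encoded, and a cryptographic hash only matches byte-identical copies.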

“We take input from all of those detection layers and then we finalize the output together [as an overall score] to have the highest degree of certainty,” he says.
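Sentinel has not published how it combines its layers into an overall score. One simple way to fuse independent detectors is a “noisy-OR”, sketched below under loudly stated assumptions: the layer names, the `overall_score` function and the rule itself are hypothetical, and each layer is assumed to output a manipulation probability between 0 and 1.

```python
from typing import Dict


def overall_score(layer_scores: Dict[str, float], known_hash_match: bool = False) -> float:
    """Combine per-layer manipulation probabilities into one score using noisy-OR.

    Hypothetical sketch: layer names and the fusion rule are illustrative assumptions,
    not Sentinel's published method.
    """
    if known_hash_match:
        # A near-duplicate of an already verified deepfake needs no further evidence.
        return 1.0
    p_all_clear = 1.0
    for name, score in layer_scores.items():
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"score for {name!r} must be in [0, 1], got {score}")
        # Treating layers as independent: media counts as clean only if every layer clears it.
        p_all_clear *= 1.0 - score
    return 1.0 - p_all_clear


if __name__ == "__main__":
    scores = {"metadata": 0.1, "audio": 0.2, "face_frames": 0.9}
    print(round(overall_score(scores), 3))  # 0.928
```

The noisy-OR rule lets a single confident layer dominate the result, which mirrors the idea that a fake slipping past one layer can still be caught by the next. The trade-off is more false positives than a weighted average would give, and the independence assumption rarely holds exactly.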

“We already reached the point where somebody can’t say with 100% certainty if a video is a deepfake or not. Unless the video is somehow ‘cryptographically’ verifiable… or unless somebody has the original video from multiple angles and so forth,” he adds.

Tammekänd also emphasizes the importance of data in the deepfake arms race — over and above any specific technique. Sentinel’s boast on this front is that it has amassed the “largest” database of in-the-wild deepfakes to train its algorithms on.

It has an in-house verification team working on data acquisition by applying its own detection system to suspect media, with three human verification specialists who “all have to agree” in order for it to verify the most sophisticated organic deepfakes.

“Every day we’re downloading deepfakes from all the major social platforms — YouTube, Facebook, Instagram, TikTok, then there’s Asian ones, Russian ones, also porn sites as well,” he says.

“If you train a deepfake model based on let’s say Facebook data sets, then it doesn’t really generalize — it can detect deepfakes like itself but it doesn’t generalize well with deepfakes in the wild. So that’s why the detection is really 80% the data engine.”

Not that Sentinel can always be sure. Tammekänd gives the example of a short video clip, released by Chinese state media, of a poet who was thought to have been killed by the military; in the clip he appeared to say he was alive and well and told people not to worry.

“Although our algorithms show that, with a very high degree of certainty, it is not manipulated — and most likely the person was just brainwashed — we can’t say with 100% certainty that the video is not a deepfake,” he says.

Sentinel’s founders, whose backgrounds include NATO, Monese and the U.K. Royal Navy, actually started out in 2018 on a very different startup idea, called Sidekik: a Black Mirror-esque technology that ingested communications data to create a “digital clone” of a person in the form of a tonally similar chatbot (or audiobot).

The idea was that people could use this virtual double to hand off basic admin-style tasks. But Tammekänd says they became concerned about the potential for misuse — hence pivoting to deepfake detection.

They’re targeting their technology at governments, international media outlets and defence agencies. Early clients since the launch of their subscription service in Q2 this year include the European Union External Action Service and the Estonian government.

Their stated aim is to help protect democracies from disinformation campaigns and other malicious information operations, which means they’re being very careful about who gets access to their tech. “We have a very heavy vetting process,” he notes. “For example we work only with NATO allies.”

“We have had requests from Saudi Arabia and China but obviously that is a no-go from our side,” Tammekänd adds.

A recent study the startup conducted suggests exponential growth of deepfakes in the wild (i.e. found anywhere online), with more than 145,000 examples identified so far in 2020, indicating nine-fold year-on-year growth.

Tools to create deepfakes are certainly getting more accessible. And while plenty are, at face value, designed to offer harmless fun/entertainment — such as the likes of selfie-shifting app Reface — it’s clear that without thoughtful controls (including deepfake detection systems) the synthesized content they enable could be misappropriated to manipulate unsuspecting viewers.

Scaling deepfake detection up to the sheer volume of media being shared on social platforms today is one major challenge Tammekänd identifies.

“Facebook or Google could scale up [their own deepfake detection] but it would cost so much today that they would have to put in a lot of resources and their revenue would obviously fall drastically — so it’s fundamentally a triple standard; what are the business incentives?” he suggests.

There is also the risk posed by very sophisticated, very well-funded adversaries (perhaps state actors, presumably pursuing very high-value targets) mounting what he describes as “deepfake zero day” targeted attacks.

“Fundamentally it is the same thing in cybersecurity,” he says. “Basically you can mitigate [the vast majority] of the deepfakes if the business incentives are right. You can do that. But there will always be those deepfakes which can be developed as zero days by sophisticated adversaries. And nobody today has a very good method or let’s say approach of how to detect those.

“The only known method is the layered defence — and hope that one of those defence layers will pick it up.”

It’s certainly getting cheaper and easier for any internet user to make and distribute plausible fakes. And as the risks posed by deepfakes rise up political and corporate agendas (the European Union is readying a Democracy Action Plan to respond to disinformation threats, for example), Sentinel is positioning itself to sell not only deepfake detection but also bespoke consultancy services, powered by learnings extracted from its deepfake data set.

“We have a whole product — meaning we just don’t offer a ‘black box’ but also provide prediction explainability, training data statistics in order to mitigate bias, matching against already known deepfakes and threat modelling for our clients through consulting,” the startup tells us. “Those key factors have made us the choice of clients so far.”

Asked what he sees as the biggest risks that deepfakes pose to Western society, Tammekänd says, in the short term, the major worry is election interference.

“One probability is that during the election — or a day or two days before — imagine Joe Biden saying ‘I have a cancer, don’t vote for me.’ That video goes viral,” he suggests, sketching one near-term risk.

“The technology’s already there,” he adds, noting that he had a recent call with a data scientist from one of the consumer deepfake apps who told him they’d been contacted by different security organizations concerned about just such a risk.

“From a technical perspective it could definitely be pulled off… and once it goes viral, for people, seeing is believing,” he adds. “If you look at the ‘cheap fakes’ that have already had a massive impact, a deepfake doesn’t have to be perfect, actually, it just has to be believable in a good context — so there’s a large number of voters who can fall for that.”

Longer term, he argues the risk is really massive: People could lose trust in digital media, period.

“It’s not only about videos, it can be images, it can be voice. And actually we’re already seeing the convergence of them,” he says. “So what you can actually simulate are full events… that I could watch on social media and all the different channels.

“So we will only trust digital media that is verified, basically — that has some method of verification behind that.”

Another even more dystopian AI-warped future is that people will no longer care what’s real or not online — they’ll just believe whatever manipulated media panders to their existing prejudices. (And given how many people have fallen down bizarre conspiracy rabbit holes seeded by a few textual suggestions posted online, that seems all too possible.)

“Eventually people don’t care. Which is a very risky premise,” he suggests. “There’s a lot of talk about where are the ‘nuclear bombs’ of deepfakes? Let’s say it’s just a matter of time when a deepfake of a politician comes out that will do massive damage but… I don’t think that’s the biggest systematic risk here.

“The biggest systematic risk is, if you look from the perspective of history, what has happened is information production has become cheaper and easier and sharing has become quicker. So everything from Gutenberg’s printing press, TV, radio, social media, internet. What’s happening now is the information that we consume on the internet doesn’t have to be produced by another human — and thanks to algorithms you can on a binary time-scale do it on a mass scale and in a hyper-personalized way. So that’s the biggest systematic risk. We will not fundamentally understand what is reality anymore online. What is human and what is not human.”

The potential consequences of such a scenario are myriad, ranging from social division on steroids (even more confusion and chaos, engendering rising anarchy and violent individualism) to, perhaps, a mass switching off, if large swathes of the mainstream simply decide to stop listening to the internet because so much online content is nonsense.

From there things could even go full circle — back to people “reading more trusted sources again,” as Tammekänd suggests. But with so much at shapeshifting stake, one thing looks like a safe bet: Smart, data-driven tools that help people navigate an ever more chameleonic and questionable media landscape will be in demand.
